Leading an underfunded team is a challenge most managers will face over their careers. This blog post provides techniques and a framework for delivering impact under such conditions.

Scenario

Your team owns several mission-critical services. Something happens (a reorg, a new leader, attrition, business priority change), and suddenly, half of the team is gone.

Requests for backfill fail — headcount will only become available at some indeterminate future date due to economic conditions/business priorities/re-organization.

You are still responsible for delivering committed projects and maintaining healthy service levels despite the reduced team size. Your challenge is achieving the seemingly opposing goals: delivering value without burning out the remaining engineers.

The typical advice in crunch times is to do less — reduce in-progress work, say no to new asks, and cut future projects. These proven practices work but usually are not enough.

The defense-in-depth strategy helps leaders achieve more, boost morale, and keep the team going.

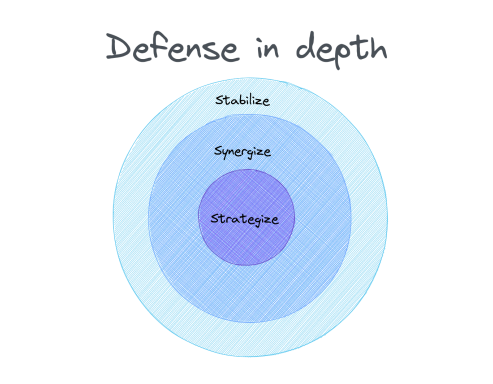

Defense in Depth: Stabilize, Synergize, Strategize

Defense in depth is a strategy in computer security (as well as the military) based upon multiple defensive layers; if one layer is breached, there is another layer to overcome. Attackers must go through all layers to succeed — like peeling all layers of an onion before getting to the core.

The overlapping layers provide coverage for multiple possibilities and eventualities.

Stabilize, Synergize, Strategize

The stabilize, synergize, and strategize approach is a defense-in-depth strategy against the forces of chaos and disorder: you continuously withdraw to the next ring whenever a layer is breached, and it helps you keep your customers and engineers happy.

Layer 1: Stabilize

Eliminate surprises

Comb the backlog to identify critical work and plan adequately. This helps you nip surprises in the bud and eliminate obsolete tasks. In tandem, proactively inform customers about your situation to clarify expectations and minimize disappointments.

Prioritize rigorously

Now that you've cleared the backlog and informed customers, you must raise the acceptance bar. Without this bar, the team will again get overwhelmed with a deluge of requests. Punt all non-critical items during triage; a good heuristic might be to start with no for all incoming requests.

Introduce shield

Set up a rotating roster of engineers who act as first responders and shield the team from disruptive asks. Since all reactive requests will go through the shield engineer, you must clarify expectations around incident handling and triage, response patterns, and shield responsibilities.

Things to watch out for:

- Workload concerns: Ensure the rotation is fair by setting clear guidelines on what gets handled by the shield crew to prevent burnout.

- Inefficiency: The shield engineer might not be the domain expert; however, this is a great way to boost team resilience by democratizing critical knowledge.

- Quality > Quantity: Ensure that every week, the team is getting better; rather than closing multiple items to get to a clean state, concentrate on fixing things permanently. Continuously communicate the Boys' scout rule.

This tactic enables the team to focus on what matters.

Establish Slack

Always have slack (Even in non-crunch periods); there are always surprises, and it is better to be prepared than not. Eventually, some urgent task will slip through all the defenses and require immediate handling. Without slack, you'll have to react and disrupt the entire team.

Prevent this by always having a buffer — some spare engineering capacity in case a fire erupts. Watch out for repeatedly using the same engineer for fire-fighting — it is a fast way to burn out.

Layer 2: Synergize

Seek help

This is not the time to be picky about resourcing; you need all the help you can get. Snap at every opportunity — explore and be creative; for example, can partner teams like SRE or QA help with some of the identified backlog items? Or can they help with day-to-day work and/or surprises when they pop up?

Onboarding exercises are another outlet: identify uncomplicated but essential tasks and reach out to the company-wide onboarding team to seek synergistic options. This often leads to pleasant win-win outcomes: new hires onboard with real work, and your team gets issues fixed. If there is no onboarding team, contact peer EMs with new hires.

Leverage partners

A partner team wants an important feature urgently; unfortunately, your team can't fulfill their request due to the resource crunch. Ask if the partner team can do the work while your team provides support; if they agree, you collaborate and hash out an operating agreement.

This can significantly reduce the burden on your team; for example, instead of having three engineers spend a month building that feature, you can allocate 2 engineers towards reviewing designs, approving PRs, and unblocking the partner team.

Form alliances

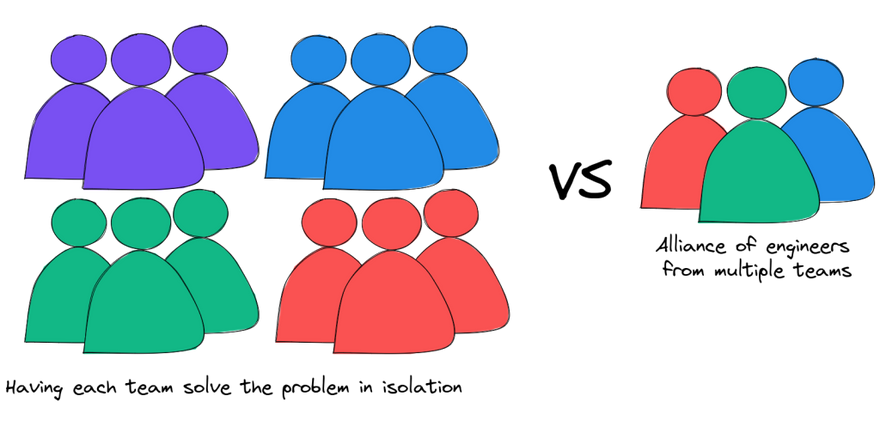

This technique works best for tasks the entire organization has to do; good examples include accessibility fixes or compliance items.

Imagine an organization with 10 teams who all have to execute a specific task to become compliant by a certain date. Typically, the mandate starts from the top and trickles across the ten team managers. In turn, these managers find engineers on their team to do the work — you now have 21 people (1 director + 10 managers + 10 engineers) trying to do the same thing. Add in a few meetings, updates, and status reports, and things get complicated quickly.

Having each team in the organization fund implementation in isolation is inefficient; it leads to repeated ramp-up cycles and the proliferation of bespoke solutions. A more efficient approach is to have a single driver who leads a virtual team to solve the problem efficiently in one broad swathe. The cost in this scenario drops to 5 people (1 director + 1 virtual team leader + 3 engineers), a 4x improvement in capacity costs! In addition to the efficiency, the driver's higher impact and growth are extra benefits.

Layer 3: Strategize

Swarm

Rather than have a few engineers toil endlessly on some mundane easily-repeatable task, consider farming it out to the entire team and completing it in one fell swoop.

A disastrous data durability outage necessitated cleaning up hundreds of stale feature switches. The mechanical clean-up of obsolete flags was soul-numbing and morale-depleting for engineers; a quagmire, no one wanted to do the work, but it had to be done fast to plug a glaring hole. Throwing a few folks at the problem would probably have motivated them to interview elsewhere. Instead, the team swarmed on the dreary task; it was fun as we got to complete it within two days.

Strategic investments

Starting to prioritize and elevate high-leverage multipliers. These investments might take months to pay off, but they are essential. They are capital investments that make teams more effective and efficient.

Caveat: Making a case and getting buy-in for such resource-intensive projects in such a resource-constrained period might be challenging.

Conclusion

The defense-in-depth strategy introduces defense layers that cannot be breached by disruptions. When I used these techniques on a team going through a tough patch, we nullified all surprises over six months — only one disruptive intervention slipped through the three layers. Despite being 50% smaller and owning the same services, the team made significant progress on its primary goals.

Get creative; there is much more you can do if you explore, challenge assumptions, and push the boundaries.

Stabilize. Synergize. Strategize.

Top comments (0)